In the last post, I described an epistemic bargaining problem, and applied Nash’s solution to it. That gave me a characterization of a compromise “team credence”, as that credence which maximizes a certain function of the team members’ epistemic utilities. This post now describes more directly what that team credence will be (for now, I only consider the special case where the team credence concerns a single proposition). Here’s the TL;DR: so long as two agents are each sufficiently epistemically motivated to find a compromise, then they will compromise on a team credence that is the linear average of their individual credences iff they are equally motivated to find a compromise. If there is an imbalance of motivation, the compromise will be strictly between the linear average and the starting credence of the agent who is less motivated by finding the team credence.

To recap, we had a set of team members. These team members each assign “expected epistemic utility” to possible credal states (including credal states that concern only attitudes to the single proposition p). Expected epistemic utility is assumed to be the expected accuracy of the target credal state x, and inaccuracy is assumed to be measured by the Brier score: $(1-x(p))^2$ if p is true, $x(p)^2$ if p is false (to get a measure of accuracy, subtract inaccuracy from 1). For an agent whose own credal state is a, it’s a familiar piece of bookwork to show this implies that the epistemic utility of a credal state x(p) is, up to an additive term that does not depend on x, $1-(a(p)-x(p))^2$: one minus the square euclidean distance between the agent’s own a(p) and the target credence x(p). From now on, I’ll drop the indexing to p, since only one proposition will be at issue throughout.
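As a quick numerical check of that bookwork, here is a minimal Python sketch (the function names are mine, not anything from the earlier post). It suggests that expected Brier accuracy differs from $1-(a-x)^2$ only by the term $a(1-a)$, which does not depend on x and so makes no difference to which x the agent prefers.

```python
def brier_inaccuracy(x, truth):
    """Brier inaccuracy of credence x in p: (1-x)^2 if p is true, x^2 if p is false."""
    return (1 - x) ** 2 if truth else x ** 2

def expected_accuracy(a, x):
    """Expected accuracy (1 - inaccuracy) of credence x, by the lights of credence a."""
    return a * (1 - brier_inaccuracy(x, True)) + (1 - a) * (1 - brier_inaccuracy(x, False))

def distance_form(a, x):
    """1 - (a - x)^2, less the x-independent term a(1 - a)."""
    return 1 - (a - x) ** 2 - a * (1 - a)

a = 0.7  # illustrative starting credence
for x in (0.0, 0.25, 0.7, 1.0):
    # The two expressions agree at every candidate credence tried.
    assert abs(expected_accuracy(a, x) - distance_form(a, x)) < 1e-12
```

Since the extra term is constant in x, the agent's ranking of candidate credences is fixed entirely by squared distance from a.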

The epistemic decision facing the several agents is this: they can form a team credence in p with a specific value, so long as all cooperate. But if any one dissents, no team credence is formed. The situation where no team credence is formed is assumed to have a certain epistemic utility for agent A (who has credal state a), which I’ll write as $1-\delta_A$. So forming the specific team credence x will be worthwhile from A’s perspective if and only if the epistemic utility (expected accuracy) of forming that team credence is greater than the default, i.e. iff $1-(a-x)^2 > 1-\delta_A$. Now, we may be able to find a possible credal state $d_a$ which the agent ranks equally with the default credal state, $1-(a-d_a)^2 = 1-\delta_A$. You can think of $d_a$ as A’s breaking point: the credence at which it’s no longer in her epistemic interests to form a team credence, since she’s indifferent between that and the default where no team credence is formed. A little rearrangement gets us to: $d_a = a \pm \sqrt{\delta_A}$. So really we have both an upper and lower breaking point, and within these bounds, a zone of acceptable compromises, within which a team credence will look good from that agent’s perspective.
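To make the breaking points concrete, here is a small Python sketch. It assumes, as a labelling choice of mine, that the default utility for an agent is written as 1 − delta, so that the breaking points sit at the agent's credence plus or minus the square root of delta. The function names are hypothetical.

```python
import math

def breaking_points(a, delta):
    """Lower and upper breaking points for an agent with credence a,
    assuming her default (no-deal) utility is 1 - delta with delta >= 0."""
    if delta < 0:
        raise ValueError("delta must be non-negative for breaking points to exist")
    radius = math.sqrt(delta)
    return a - radius, a + radius

def acceptable(a, delta, x):
    """True if team credence x beats the default from the agent's perspective."""
    return 1 - (a - x) ** 2 > 1 - delta

lower, upper = breaking_points(0.8, 0.09)   # illustrative numbers
print(lower, upper)
print(acceptable(0.8, 0.09, 0.6), acceptable(0.8, 0.09, 0.4))
```

Note that the upper breaking point in this example lies above 1, so it is a breaking point only in a manner of speaking.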

Possible credences will be, as is standard, within the interval $[0,1]$. The breaking points $d_a$ may well be outside that interval. Consider, for example, the case where an agent has credence 1 or 0 in p to start with, or a situation where not forming a team credence is a true epistemic disaster, of disutility >1. It’ll be formally convenient to still talk of breaking point credences in these cases, but that’ll just be a manner of speaking.

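A precondition of having a bargaining problem is that there are some potential team credences x such that all team members prefer having team credence x to the scenario where no team credence is formed. That is, for all y in the group, $1-(y-x)^2 > 1-\delta_y$ (writing y for the member’s credence and $1-\delta_y$ for their default utility). That amounts to insisting that the set of open intervals $(y-\sqrt{\delta_y},\ y+\sqrt{\delta_y})$ have a non-empty intersection, or equivalently, where y runs over the team members, $\max_y\,(y-\sqrt{\delta_y}) < \min_y\,(y+\sqrt{\delta_y})$.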

That’s a lot of maths, but concretely you can think about it like this: it’ll be harder to strike a compromise on p the more distant the team members’ credences are from each other. If, however, each feels great urgency about finding a team credence, that will widen the zone of compromise from their perspective. So even if A starts with credence 1 in p, and B starts with credence 0 in p, they will each view some compromises between the two of them as acceptable if A’s lower breaking point is lower than B’s upper breaking point. That relates to the utility/disutility of failure: if failure for A is worse than the epistemic cost of having credence 0.5 in p, and similarly for B, then credence 0.5 is a potential compromise for the two of them.
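The overlap condition can be sketched in a few lines of Python. This is an illustration under my own labelling (each agent is a pair of a credence and a delta, with default utility 1 − delta), not code from the original project. The example uses the case of A at credence 1 and B at credence 0.

```python
import math

def compromise_zone(agents):
    """agents: list of (credence, delta) pairs, default utility assumed to be 1 - delta.
    Returns the open interval (lo, hi) of mutually acceptable team credences,
    or None when the individual zones do not overlap."""
    lo = max(cred - math.sqrt(delta) for cred, delta in agents)
    hi = min(cred + math.sqrt(delta) for cred, delta in agents)
    return (lo, hi) if lo < hi else None

# Failure is worse than holding credence 0.5 for both agents (delta > 0.25):
print(compromise_zone([(1.0, 0.36), (0.0, 0.36)]))
# Failure is not bad enough for either agent to accept 0.5 (delta < 0.25):
print(compromise_zone([(1.0, 0.16), (0.0, 0.16)]))
```

In the first call the zone contains 0.5; in the second there is no zone at all, so there is no bargaining problem to solve.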

So these are the conditions for there to be a bargaining problem in the first place. If they are met, everyone wants a deal. The question that remains is which among this set of acceptable credences they should pick. As described in the last post, if we endorse Nash’s four conditions we have an answer: the team credence will be the x that maximizes the following quantity: $\prod_y \big((1-(y-x)^2)-(1-\delta_y)\big)$. Simplifying a touch, the latter curve becomes $\prod_y \big(\delta_y-(x-y)^2\big)$.

Before moving on, I do want to make one observation that will be important later: that the Nash compromise team credence is somewhere in the interval spanned by the agents’ starting credences. This is pretty intuitively obvious, but to argue for it formally: suppose that we have N agents with various starting credences, whose span is the interval $[a,b]$. If the upper endpoint b is not within the intersection of the zones of compromise, then nothing above b is within this intersection either (since those constraints take the form of an intersection of open balls around points no greater than b). On the other hand, suppose that b is contained within the intersection of all the agents’ zones of compromise. Then we can see that the Nash product cannot take its maximum value above b. That’s because b will be nearer all the starting credences than anything above b, and so every multiplicand (and hence the product) will be greater at the endpoint than it is at any point above it. The same goes, in reverse, for the other endpoint a.

So what does Nash’s maximization condition say about specific cases? One complicating factor is that this is a constrained maximization problem, over the set of x that meet every agent’s constraint, $\{x : (x-y)^2 < \delta_y \text{ for all } y\}$. So the curve defined by Nash’s product alone doesn’t give us all the information we need to pick the constrained maximum. I’ll come back to this at the end, but for now, I’ll ignore the issue and concentrate on finding a local maximum of the Nash curve.

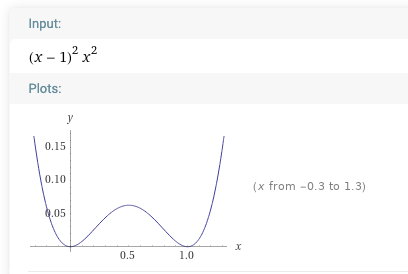

Let’s get going with the local maximization problem then, for the two-agent case. We want to find a turning point of the Nash curve $(\delta_A-(x-a)^2)(\delta_B-(x-b)^2)$. To see what’s going on with this quartic polynomial, consider a special simple case with a at 1 and b at 0, and both offsets $\delta_A$, $\delta_B$ at zero. That gives $x^2(x-1)^2$, which I’ve sketched for you (as with the other images that follow) using wolframalpha:

This polynomial has roots at 0 and 1—and every candidate team credence of course has to be within this zone. And so you can see immediately that the leading candidate to be the compromise credence will be the local maximum of this curve. To find the value of the maximum, we remember secondary school maths and set the derivative of the curve equal to zero.

Note we can factorize the derivative as $2x(x-1)(2x-1)$. The cubic has three roots: 0 and 1 (those are the minimum points of the original quartic curve) and 0.5.

This looks promising! Interpreting this in the epistemic bargaining way, we have started with two agents with extremal credences 1 and 0, and found that we have a local maximum for the Nash curve at their linear average. Further, this is representative of a general pattern. If you start from $(x-a)^2(x-b)^2$, then you get curves that look like distorted versions of the above, but with roots of the original curve at a and b, and a local maximum at $\frac{a+b}{2}$, the linear average of the starting credences.

Things look even better when we recall that the maximum value of the Nash curve *for values of x that met the relevant constraints* was going to have to be within the interval spanned by the starting credences. For that tells us we can ignore all the curve except the bit between 0 and 1 in the extremal case (but we knew that anyway!) and between a and b in the general case. The arms of the original curve that shoot off to infinity can be ignored, therefore—and that’s one big step towards arguing that the local maximum (the turning point we’ve just calculated in this special case) is the point satisfying Nash’s conditions.

Now, this is all very well, except for the annoying fact that the simple case in question was one where $\delta_A=\delta_B=0$, which translates to both agents having a null zone of compromise (in terms of breaking points: the breaking point credence for each agent is just the credence they already have). There’s no non-trivial bargaining problem at all here! So the fact that there’s a local maximum of the curve at the linear average doesn’t tell us anything of interest. Bother.

But the general case can be understood in relation to this one. Again starting from extremal credences for simplicity (A having credence 1 in p, B having credence 0 in p), the non-trivial bargaining problems will take the form $(\delta_A-(x-1)^2)(\delta_B-x^2)$. Multiplying this out we have: $x^2(x-1)^2-\delta_A x^2-\delta_B(x-1)^2+\delta_A\delta_B$. Differentiating this and setting the result to zero we have $2x(x-1)(2x-1)-2\delta_A x-2\delta_B(x-1)=0$. This is equivalent to finding the intersection of the cubic sketched above and the linear curve $y=2(\delta_A+\delta_B)x-2\delta_B$. To illustrate, here’s a sketch of what happens when both parameters are set to 1. The intersection, and so the local maximum of the original curve, is the average of the two credences, at 0.5:

Below is a case where $\delta_A$ is larger than $\delta_B$. Note that the intersection is now below the linear average of the two starting credences (that makes sense: the parameters tell us that A, with full credence, is more distant from her breaking point than B, who has zero credence, or equivalently, failing to agree a team credence is in relative terms better for B than for A. So A is in the weaker bargaining position and the solution is more in line with B’s credence):

And below is what happens if we reverse the parameters, with the intersection point, as expected, nearer to the agent with less to lose, in this case, A (who had full credence):
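For readers without the sketches to hand, the same pattern can be checked numerically. The following Python sketch assumes the extremal Nash curve has the form $(\delta_A-(x-1)^2)(\delta_B-x^2)$, and finds where its derivative (the cubic minus the line) vanishes on [0, 1] by bisection. The parameter values 1 and 2 are illustrative choices of mine, not necessarily those used in the original sketches.

```python
def nash_derivative(x, delta_a, delta_b):
    """Derivative of (delta_a - (x-1)^2) * (delta_b - x^2), written as cubic minus line."""
    cubic = 2 * x * (x - 1) * (2 * x - 1)
    line = 2 * (delta_a + delta_b) * x - 2 * delta_b
    return cubic - line

def turning_point(delta_a, delta_b, tol=1e-12):
    """Bisection on [0, 1]. With both deltas positive the derivative is
    positive at 0 and negative at 1, so a sign change is bracketed."""
    lo, hi = 0.0, 1.0
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if nash_derivative(mid, delta_a, delta_b) > 0:
            lo = mid   # curve still rising: the maximum lies to the right
        else:
            hi = mid   # curve flat or falling: the maximum lies to the left
    return (lo + hi) / 2

print(turning_point(1.0, 1.0))  # equal parameters
print(turning_point(2.0, 1.0))  # A has more to lose from failure
print(turning_point(1.0, 2.0))  # B has more to lose from failure
```

With equal parameters the turning point sits at the average, 0.5; raising A's parameter pulls it below 0.5 towards B, and reversing the parameters gives the mirror image.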

What of the general two-agent case, where the equation for which we’re finding the local maximum is $(\delta_A-(x-a)^2)(\delta_B-(x-b)^2)$? This time I’ll run through it algebraically. Assume without loss of generality a>b. From a note earlier, we know the compromise credence is to be found in the interval $[b,a]$. So under what conditions is it in the top half, $(\frac{a+b}{2},\ a]$? In the bottom half, $[b,\ \frac{a+b}{2})$? And at the midpoint $\frac{a+b}{2}$?

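First, multiply out: $(x-a)^2(x-b)^2-\delta_A(x-b)^2-\delta_B(x-a)^2+\delta_A\delta_B$. Second, differentiate the quartic and set the result to zero. This gives us $2(x-a)(x-b)(2x-a-b)-2\delta_A(x-b)-2\delta_B(x-a)=0$. This is equivalent to finding the intersection of the cubic $y=2(x-a)(x-b)(2x-a-b)$ and the linear curve $y=2(\delta_A+\delta_B)x-2(a\delta_B+b\delta_A)$. The former, recall, is like a squished version of the earlier cubic, with roots at $b$, $\frac{a+b}{2}$ and $a$, the middle root corresponding to the local maximum.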

Now, you can eyeball the curve sketches above to convince yourself of the following biconditional: the intersection of the cubic and linear will be at an x-value within $(\frac{a+b}{2},\ a]$ iff the linear curve intersects the x-axis within the same interval. Inverting the linear equation, we find that its intersection with the x-axis will be $x=\frac{a\delta_B+b\delta_A}{\delta_A+\delta_B}$. That will be within the interval only if $\frac{a\delta_B+b\delta_A}{\delta_A+\delta_B} > \frac{a+b}{2}$, which is to say: $2(a\delta_B+b\delta_A) > (a+b)(\delta_A+\delta_B)$. Multiply out, simplify while remembering we were assuming a>b, and you will find this is equivalent to: $\delta_B > \delta_A$. (Interpretation: the epistemic utility of no team credence is higher for A (at $1-\delta_A$) than for B (at $1-\delta_B$).)

By similar manipulations, we can show that the intersection of the two curves lies within $[b,\ \frac{a+b}{2})$ only if $\delta_A > \delta_B$. And the two curves intersect at $\frac{a+b}{2}$ iff $\delta_A = \delta_B$. And given the curves intersect somewhere in the interval [b,a], and the three conditions are mutually exclusive, we can now strengthen these two conditionals to biconditionals.

To summarize: Maximizing the Nash curve over [b,a] to pick a compromise team-credence gives the linear average when the epistemic utility of failing to reach a team-credence is the same for both parties. When there is an imbalance in the epistemic utilities of failure, if we pick the team credence in the same way, we’ll get a result that is nearer the starting credence of the agent with less to lose from failure.
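The summary can be spot-checked by maximizing the two-agent Nash curve directly. The Python sketch below assumes the curve is $(\delta_A-(x-a)^2)(\delta_B-(x-b)^2)$ with a > b and positive deltas, in which case the curve rises to a single peak on [b, a] and a ternary search is enough. The specific numbers are illustrative choices of mine.

```python
def nash_product(x, a, b, delta_a, delta_b):
    """Two-agent Nash curve, with default utilities assumed to be 1 - delta."""
    return (delta_a - (x - a) ** 2) * (delta_b - (x - b) ** 2)

def nash_compromise(a, b, delta_a, delta_b, tol=1e-10):
    """Ternary search for the maximizer on [b, a], assuming a > b and both
    deltas positive, so the curve has a single peak on that interval."""
    lo, hi = b, a
    while hi - lo > tol:
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if nash_product(m1, a, b, delta_a, delta_b) < nash_product(m2, a, b, delta_a, delta_b):
            lo = m1   # peak is to the right of m1
        else:
            hi = m2   # peak is to the left of m2
    return (lo + hi) / 2

a, b = 0.9, 0.3                          # linear average is 0.6
print(nash_compromise(a, b, 0.5, 0.5))   # equal stakes
print(nash_compromise(a, b, 0.5, 1.0))   # B has more to lose
print(nash_compromise(a, b, 1.0, 0.5))   # A has more to lose
```

The deltas here are all above $(a-b)^2 = 0.36$, so both zones of compromise cover the whole of [b, a] and the unconstrained peak is also the constrained one.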

All this comes with the caveat mentioned earlier: I’ve been talking about how to find the maximum of the Nash curve over the whole of [b,a]. We need to also remember that we were to find the maximum over a constrained set of credences, and this might be a proper subinterval of [b,a]. At the limit, as we saw earlier, there may be no credences meeting the constraints at all. So it’s not guaranteed (yet) that the Nash compromise in all (two person, one proposition) cases satisfies the description given above. But it will meet that condition if the zones of compromise are big enough: if they are big enough that [b,a] is contained within them.

That’s enough for today! The next set of questions for this project I hope are pretty clear: Is there more to say about the case when the constraints pick out a proper subinterval of [b,a]? How does this generalize to the N-person case? How does this generalize to a multiple proposition case? How does it generalize to scoring rules other than the Brier score?

P.S. Thanks to Seamus Bradley and Nick Owen for discussion of this. As they noted, you can use computer assistance to find exact roots for the cubic and so the turning point of the quartic. Unfortunately, those exact roots look horrific, which pushed me towards the qualitative results reported above. I include the horror for the sake of interest, with C and D being the delta terms for a and b respectively:

P.P.S. Some further notes about the next set of questions.

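(i) On the issue of when we have a well defined bargaining problem. For the two-person case, the following holds: there is a non-trivial bargaining problem when $\sqrt{\delta_A}+\sqrt{\delta_B} > |a-b|$. In the special case where $\delta_A=\delta_B=\delta$, that means $\sqrt{\delta} > \frac{|a-b|}{2}$. The compromise zone is maximal, i.e. the whole of the interval between a and b, iff $\sqrt{\delta_A} > |a-b|$ and $\sqrt{\delta_B} > |a-b|$.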

The following graphical characterization was illuminating to me. In general, the quartic has a “W” shaped curve. For very negative values of x, both $(\delta_A-(x-a)^2)$ and $(\delta_B-(x-b)^2)$ are large and negative, and so their product is large and positive. For very positive values of x, both are large and again negative, so their product is large and again positive. If all roots are real, then moving from left to right (and taking A’s zone to start furthest left, for illustration), as x approaches a we get an interval where $(\delta_A-(x-a)^2)$ is positive and the other negative, then an interval where both are positive, and then a period when only $(\delta_B-(x-b)^2)$ is positive, before both turn negative. Now note that the middle interval exactly corresponds to the values for which both agents have positive utility difference, and so is exactly the zone of compromise. So another characterization of the compromise zone is the area between the middle two roots of the quartic (if those roots are not real, there is no bargaining problem). This is important, because it illuminates why finding the local maximum is the right method: the constraints are that we only maximize in that specific interval between the middle two roots, and the maximum subject to that constraint is exactly the local maximum.

(ii) For the 3-person case, we can consider constraints and maxima. For the former, it’s a necessary condition that the zones of the most confident and least confident individuals overlap, and so if we designate those a and b, we again need the following for there to be a non-trivial zone of compromise: $\sqrt{\delta_A}+\sqrt{\delta_B} > a-b$. In addition, however, we need the zone around c to overlap this, which is a complex three case condition involving c and $\delta_C$. If c is at the midpoint of a and b then any nonzero $\delta_C$ will do. Likewise, it is necessary for the compromise zone to be maximal that $\sqrt{\delta_A} > a-b$ and $\sqrt{\delta_B} > a-b$, and then there is a more complex condition involving c and $\delta_C$. $\sqrt{\delta_C} > a-b$ suffices, but e.g. if c is the midpoint then a smaller $\delta_C$ will do. Something similar happens with the general N-person case: that the zones of compromise of the extremes overlap is a necessary condition, and then there’s an array of more complex conditions for those whose credence lies between the two extremes.

The N-person case involves us finding a maximal point of a polynomial of degree 2N. The new challenge here is that there are multiple local maxima, which can all be between 0 and 1, in principle. The generalization of the point made earlier is now crucial to understand what is happening. Suppose all roots are real. As we scan from left to right, we start from a point where all the multiplicands (the epistemic utility differences of the agents) are negative, and gradually hit points where more and more agents have positive epistemic utility difference (toggling the sign of the product, i.e. the curve, from positive to negative and back again), until eventually all multiplicands are positive. Then, as we continue to scan to the right, more and more turn negative. The key observation is that the zone of compromise for all agents consists of those points at which all agents have positive utility difference, which is the middle hump of the serpent’s back. The conditions for this existing, and for being maximal, i.e. covering the whole interval spanned by the starting credences, are given above. But a crucial observation is that the maximization problem is now well defined: we need to find the local maximum of this middle hump.

What are the compromise credences in these cases? Well, here’s one special case that is as you’d expect: if c is at the average of a and b, and the threatened losses are the same for a and b, then the compromise team credence will be c. If c is nearer to a than b, the credence is nearer to a than b, and vice versa. If the threatened loss is bigger for a than b, then the compromise is nearer b (if c is at the average). How these trade off, and the N-person generalization, will require more work. There are some attractive initial hypotheses that fail. For example, in the two person case, as reported above, when the potential losses are equal, the compromise is the average. But the natural generalization of this already fails in the three person case: when two agents have credence 1 and a third has credence 0, with all relevant deltas being set to 1, the predicted compromise credence is 0.602, less than the arithmetical mean of 0.666…
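The three-person counterexample is easy to reproduce. The Python sketch below assumes the N-person Nash curve is the product of $(\delta_y-(x-y)^2)$ over the agents, and does a plain grid search over [0, 1], which with these numbers coincides with the zone of compromise. The helper names are mine.

```python
import math

def nash_product(x, agents):
    """Product of (delta - (x - credence)^2) over (credence, delta) pairs."""
    return math.prod(delta - (x - cred) ** 2 for cred, delta in agents)

def nash_compromise(agents, lo, hi, steps=100_000):
    """Grid search for the maximizer of the Nash product on [lo, hi]."""
    grid = (lo + (hi - lo) * i / steps for i in range(steps + 1))
    return max(grid, key=lambda x: nash_product(x, agents))

# Two agents at credence 1, one at credence 0, all deltas set to 1.
agents = [(1.0, 1.0), (1.0, 1.0), (0.0, 1.0)]
team = nash_compromise(agents, 0.0, 1.0)
mean = sum(cred for cred, _ in agents) / len(agents)
print(round(team, 3), round(mean, 3))
```

The maximizer lands at roughly 0.602, in line with the figure quoted above and short of the arithmetic mean of two thirds.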

(iii) For the M-proposition case: we consider a credence function c over M propositions to be a point in M-dimensional space, and map that by the Brier score to an epistemic value of that credence given a truth value assignment, the expected utility of which relative to credence b is fixed by the square euclidean distance between b and c. So finding a compromise team credence function becomes a maximization problem over the surface defined by the Nash product in that M-dimensional space. Now, curve sketching in WolframAlpha raised my confidence that you solve this maximization problem by solving the maximization problems for each of its one dimensional projections (evidence: when the threat points are the same for each person, then the solution is the linear average, just as we found above). But I can’t right now give a general argument for this. I presume a bit of knowledge about differential geometry should make this conjecture pretty easy to support or refute.

(iv) I haven’t thought about other measures of accuracy yet.
