In reading up for my new project on Group Thinking, I’ve found that people attach a certain label to a view of the metaphysics of group belief and desire that I find quite attractive. That label is “functionalism”. I’ve found myself very confused about what that common label means, so what follows is where I’ve got to in sorting that out.

Now, at a really rough level, I expect anything deserving the name “functionalism” to have at least two theoretical categories: roles and realizers. For example, if you’re going to be a functionalist about the property of being in pain, you’ll be committed to (i) the idea that there is a functional role associated with pain; (ii) the idea that if anything is to be in pain, then it needs to have a realizer property, i.e. to instantiate a property that plays the functional role.

That allows us a lot of flexibility on how we flesh out the details beyond this. We might have various accounts of what sort of theories of functional roles to give. We might have various accounts of what the realization relation is—and whether we need to allow for multiple realisors, imperfect realizers, etc etc. We might differ in whether we identify the original property of being in pain with the role, the realizor, or something else. But unless we have an account that has the two part structure, it isn’t functionalism as I was taught it or as I teach it.

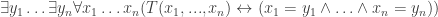

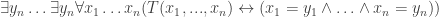

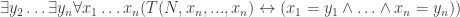

Okay, with that as the setup, let me say something about the kind of functionalism that I understand best. This starts with Lewis’s story about how to find explicit definitions of theoretical terms. We start with a theory that neologizes, that is, one that introduces a set of terms for the first time. That theory will also reuse some old vocabulary. Lewis assumed that the theory is regimented so that all the new terms are names. The old vocabulary will include predicates like “…has the property…” or “…stands in relation … to …”, if necessary, so that we can do the work of new predicates by means of new names for the relevant properties. If we start with a theory $T(t_1,\ldots,t_n,o_1,\ldots,o_m)$, where $t_1,\ldots,t_n$ are the new terms and $o_1,\ldots,o_m$ are the old terms (suppressed in the formulas below), then the following is the unique-realization sentence for $T$:

$$\exists y_1\ldots\exists y_n\,\forall x_1\ldots\forall x_n\,\big(T(x_1,\ldots,x_n)\leftrightarrow(y_1=x_1\wedge\ldots\wedge y_n=x_n)\big)$$

The following one-place predicate, with $y_1$ free, is then what we’ll mean by “the theoretical role of $t_1$”, or the “$t_1$”-role:

$$\exists y_2\ldots\exists y_n\,\forall x_1\ldots\forall x_n\,\big(T(x_1,\ldots,x_n)\leftrightarrow(y_1=x_1\wedge\ldots\wedge y_n=x_n)\big)$$

The explicit definition that Lewis offered of each new term in old vocabulary identifies it as the property that plays the relevant theoretical role. Using an iota for the definite description operator, for $t_1$ the definition is:

$$t_1=\iota y_1\,\exists y_2\ldots\exists y_n\,\forall x_1\ldots\forall x_n\,\big(T(x_1,\ldots,x_n)\leftrightarrow(y_1=x_1\wedge\ldots\wedge y_n=x_n)\big)$$

Informally, the definition says that $t_1$ is the property that plays the $t_1$-role.
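To make the pattern concrete, here is how it looks in the simplest non-trivial case, a theory with just two new terms. This is only an illustrative instance of the schema, with the old vocabulary suppressed and $T(x_1,x_2)$ standing for the result of replacing the new terms in the theory by variables. The unique-realization sentence is:

$$\exists y_1\exists y_2\,\forall x_1\forall x_2\,\big(T(x_1,x_2)\leftrightarrow(y_1=x_1\wedge y_2=x_2)\big)$$

The $t_1$-role leaves $y_1$ free, and the $t_2$-role leaves $y_2$ free:

$$\exists y_2\,\forall x_1\forall x_2\,\big(T(x_1,x_2)\leftrightarrow(y_1=x_1\wedge y_2=x_2)\big)$$

$$\exists y_1\,\forall x_1\forall x_2\,\big(T(x_1,x_2)\leftrightarrow(y_1=x_1\wedge y_2=x_2)\big)$$

So the two roles come from the same sentence and differ only in which initial existential quantifier is dropped. The two definitions then bind the freed variable with an iota:

$$t_1=\iota y_1\,\exists y_2\,\forall x_1\forall x_2\,\big(T(x_1,x_2)\leftrightarrow(y_1=x_1\wedge y_2=x_2)\big)$$

$$t_2=\iota y_2\,\exists y_1\,\forall x_1\forall x_2\,\big(T(x_1,x_2)\leftrightarrow(y_1=x_1\wedge y_2=x_2)\big)$$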

Now, Lewis proves several nice results about these definitions and their relation to the original theory $T$, using a certain understanding of the definite description operator. I won’t get into that here.

One last thing that will be important: the definite description on the right hand side of the definition sentences is, in general, a non-rigid designator. Since $T$ may be uniquely realized by different tuples of properties in different worlds, the definite description will in general pick out different properties at different worlds. And sometimes, with empirical investigation, we will be able to say something informative about the property that happens to be picked out at the actual world. For some name $N$ in our old vocabulary, rigidly designating a property, we may discover:

$$N=\iota y_1\,\exists y_2\ldots\exists y_n\,\forall x_1\ldots\forall x_n\,\big(T(x_1,\ldots,x_n)\leftrightarrow(y_1=x_1\wedge\ldots\wedge y_n=x_n)\big)$$

From this and the definition sentence, it will follow that:

$$t_1=N$$

So here we have a model for how the identification of new theoretical terms with old, familiar terms could go. In these circumstances we would call $N$ the realizer of the $t_1$-role at the actual world. In general, $N$ will be the realizer of this role at world w iff the following holds at w:

$$N=\iota y_1\,\exists y_2\ldots\exists y_n\,\forall x_1\ldots\forall x_n\,\big(T(x_1,\ldots,x_n)\leftrightarrow(y_1=x_1\wedge\ldots\wedge y_n=x_n)\big)$$
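The world-relativity of the realizer can be seen in a toy finite model. What follows is only a sketch under invented assumptions: the property names, the worlds, and the two-term theory are all hypothetical, and returning `None` for an improper description is a simplification rather than Lewis’s own treatment of the description operator.

```python
from itertools import product

# Hypothetical toy domain of properties named by old vocabulary.
PROPERTIES = ["A", "B", "C", "D"]

# Hypothetical worlds. Each world maps to the set of pairs (x1, x2)
# that satisfy the open formula T(x1, x2) at that world.
WORLDS = {
    "actual": {("A", "B")},                # uniquely realized by (A, B)
    "other": {("C", "B")},                 # uniquely realized by (C, B)
    "multiple": {("A", "B"), ("C", "D")},  # realized twice over
    "empty": set(),                        # not realized at all
}


def realizations(world):
    """Return every pair of properties that satisfies T at the world."""
    return [pair for pair in product(PROPERTIES, repeat=2)
            if pair in WORLDS[world]]


def uniquely_realized(world):
    """Truth value of the unique-realization sentence at the world."""
    return len(realizations(world)) == 1


def realizer(world, position=0):
    """Denotation at the world of the description defining one term.

    position 0 corresponds to t_1, position 1 to t_2. Returns None when
    T is not uniquely realized, i.e. when the description is improper.
    """
    found = realizations(world)
    return found[0][position] if len(found) == 1 else None


for name in WORLDS:
    print(name, uniquely_realized(name), realizer(name))
```

In this model the description for $t_1$ picks out `A` at the world labelled `actual` and `C` at the world labelled `other`, which is the non-rigidity described above. A rigid name for `A` therefore names the realizer at the first world but not at the second.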

It’s up for debate whether $t_1$ is a rigid or non-rigid designator. If it’s a rigid designator, then $t_1=N$ will be necessary if true, but the definition sentence will be contingent (presumably, an example of the contingent a priori). $t_1$ could equally be taken to be non-rigid, allowing the definition sentence to be necessarily true (as well as a priori). In that case, $t_1=N$ will be contingent (as well as a posteriori). It seems we could go either way on this, consistent with the rest of the framework.

I’ve introduced both role and realizer terminology in connection with the Lewis account of the definitions of theoretical terms. It is the model for how I understand role and realizer terminology in the context of functionalism. However, discussion of theoretical neologisms is one thing, and discussion of “functional” vocabulary is another. Lewis’s topic in “How to Define Theoretical Terms” is the former, and that gives us a particular take on the way that theory and definition sentences relate. For Lewis, the definitions are “implicitly asserted” when we put forward $T$ as a term-introducing theory; presumably we’re doing something that’s equivalent to stipulating that they are to be (a priori) true. This is not an account that can be directly applied to terms, theoretical or otherwise, that are already in common currency. It is not an account, for example, of “pain”. In the case of pain, if “definitions” are to be offered, they have to be offered as a product of analysis, not as the product of stipulation.

Let’s turn, therefore, to a context where we are working only with terms that are already common currency. And let’s suppose that we have found a theory $T$, such that for a suitable set of target vocabulary $t_1,\ldots,t_n$, both $T$ and the unique realization sentence are true. The following will be true (and similarly for each of the other target terms):

$$t_1=\iota y_1\,\exists y_2\ldots\exists y_n\,\forall x_1\ldots\forall x_n\,\big(T(x_1,\ldots,x_n)\leftrightarrow(y_1=x_1\wedge\ldots\wedge y_n=x_n)\big)$$

We shouldn’t call these “definition sentences” since it’s not clear in what sense, if any, they are “definitions”. To highlight this, note that as a limiting case, our “theory” could simply consist in saying “Red is Arnold’s favourite colour”, with Red as the target vocabulary. The unique realization sentence is then that there is a y such that for all x, x is Arnold’s favourite colour iff x=y, which is true enough. And the putative “definition sentence” would say: Red is the y such that for all x, x is Arnold’s favourite colour iff y=x. But though this is a true identity, it is quite clearly not a “definition” of the term Red, and is obviously contingent and a posteriori.

Not any old uniquely-realized theory of target old vocabulary will do, therefore. I take it that the step to an “analytic” functionalism of a Lewisian sort imposes the following constraint: we take an analytic/a priori $T$. Now if, in addition, the unique realization sentence for this vocabulary is analytic/a priori, then the “definition sentences” will be analytic/a priori. Even if the unique realization sentence is not analytic/a priori, the conditional whose antecedent is the unique realization sentence and whose consequent is a definition sentence will be analytic/a priori. So we could plausibly claim the definition sentences as “an analysis” of the relevant target vocabulary, perhaps an analysis modulo the assumption of unique realization. The conjecture, for the special case of analytic functionalism about pain, etc, will be that we could pull off this trick by letting $T$ be a systematization of a set of a priori “platitudes” that uniquely characterize the typical causal role of the property of being in pain: causing distinctive kinds of behaviour, being caused by distinctive kinds of stimuli, and interacting with other (targeted) mental states in typical kinds of ways.

The assumption that we can find an (a priori) theory T that does the job just described is a major one. But if we can do it, then we can import all the distinctions and terminology from the theoretical terms case. We will have a one-place predicate that is a “theoretical role” for the target term “pain”—which given the nature of the T we’re envisaging we could aptly call a causal-functional role of “pain”. We would be up for discovering that the role is satisfied by a property rigidly designated by some N—say, C-fibres firing. And we could reason, in the fashion Lewis and Armstrong taught us, from the “definition sentence” for pain, plus the putative empirical fact that C-fibres play the pain role, to an identification of the property of being in pain with having one’s C-fibres fire.
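Spelled out as a schema, the reasoning runs as follows. This is just an illustrative instance of the general pattern, with “pain” as the first target term, $T(x_1,\ldots,x_n)$ the result of replacing the target terms in the a priori theory by variables, and “C-fibre firing” standing in for the rigid name N. The empirical premise is, as in the text, merely putative.

$$\text{(1)}\quad \text{pain}=\iota y_1\,\exists y_2\ldots\exists y_n\,\forall x_1\ldots\forall x_n\,\big(T(x_1,\ldots,x_n)\leftrightarrow(y_1=x_1\wedge\ldots\wedge y_n=x_n)\big)$$

$$\text{(2)}\quad \text{C-fibre firing}=\iota y_1\,\exists y_2\ldots\exists y_n\,\forall x_1\ldots\forall x_n\,\big(T(x_1,\ldots,x_n)\leftrightarrow(y_1=x_1\wedge\ldots\wedge y_n=x_n)\big)$$

$$\text{(3)}\quad \text{pain}=\text{C-fibre firing}$$

Premise (1) is the “definition sentence”, delivered by analysis; premise (2) is the empirical claim that C-fibre firing plays the pain role; and (3) follows from (1) and (2) by the transitivity of identity.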

So that’s the way I understand analytic functionalism. And I can understand other forms of functionalism as variations on the theme. For example, we could start with a metaphysically necessary (but not analytic or a priori) theory which necessarily uniquely characterizes a set of target vocabulary, and extract definition sentences from it, obtaining necessarily true (but not analytic or a priori) “definition sentences” that we might go on to present as “metaphysical analyses”. We could take a scientific theory, one which uniquely characterizes a set of target terms with nomic necessity, and then extract “nomic analyses”, and so forth. In each case, the distinctive functionalist structure of role and realizer, and the relation between them, will be well understood. If functionalism is to be amended (e.g. to allow for imperfect realization, or non-unique realization) then I will want to figure out how to adjust the above theory to make the necessary changes.

It’s one thing to say that functionalisms can be represented as an instance of the how-to-define-theoretical-terms model of extracting definitions from theories. It’s quite another to say that every successful application of that model to common currency terms would be a functionalism. That further claim seems false to me.

For example, suppose we applied this kind of account to a term for which we already have an analysis ready-to-hand: the property of being a bachelor. An a priori uniquely characterizing theory says (let’s suppose): bachelorhood is the property of being male and being unmarried. So the “definition sentence” here is: bachelorhood is the y such that for all x, x is the intersection of being male and being unmarried iff y=x. What of the role and realizor properties here? The role property is being a y such that for all x, x is the intersection of being male and being unmarried iff y=x. What’s the realizer property?

Well, here’s a way of specifying a property that realizes the role in the minimal sense in which I introduced the terminology earlier: being a bachelor. Here’s another: the property that is the intersection of being unmarried and being male. But this seems dreadfully fishy. It doesn’t seem illuminating in the way typical identifications of realizors of functional roles would and should be. It might be true to say that pain realizes the pain role, and that the property of actually playing the pain role realizes the pain role. But in that paradigm case of functionalism what we are really interested in, and trust to be available, is some more illuminating characterization: e.g. that C-fibres firing plays the pain-role. And what we see from the bachelorhood case, I think, is that it’s entirely possible to apply all this analysis and for there to be no such illuminating identification of the realizor available at the end of the day.

To sum this up. In the paradigm cases of functionalism, we expect a two-step methodology. There’s first the step of identifying a relevant uniquely characterizing theory, from which by turning a crank we can extract “functional roles”. And then, we expect a second stage, where we or others do further non-trivial work (in the paradigm cases, empirical work) that gives us an illuminating way of identifying the realizors of those roles, using a vocabulary that differs from that used in characterizing the role itself. The realizors will be some relatively natural “kind” or natural enough property, relative to a somehow-privileged vocabulary. In the paradigm functionalisms, there’s also a suitable distance between the vocabulary used to specify the role, and the vocabulary used in the illuminating identification of the realizor.

Here’s a way of thinking about all this. There’s a genus-level notion of role and realizor here, which we find in functionalism, in understanding theoretical neologisms, and so forth. But in order to have a functionalism worthy of the name, we need more than such minimal roles and realizors—we need roles that are genuinely “functional” and which contrast sufficiently with their natural-enough “realizors”. That vague characterization is probably enough for us to get on with the hard work of finding examples that fit this bill.

But if this is the right way to think of things, then we should resist the thought that whenever we extract definitions from a theory in the Lewis style, we’re engaged in functionalist analysis. And I definitely want to resist the thought that in undertaking that first kind of project, we are committed to there being “realizors” of the theoretical roles used in those definitions in a more-than-minimal sense. Sometimes, perhaps, it will follow from the content of the characterizing theory that realizors of the roles will be more-than-minimal: e.g. perhaps that role is a causal one, and we are independently committed to thinking that only sufficiently natural properties can stand in causal relations. Perhaps part of the characterizing theory itself is the claim that the relevant property is natural enough. That might guarantee that if successful, the analysis will turn out to be a functionalist one. But this needs to be argued out on a case by case basis.

To go back to the beginning: when people talk about functionalist analyses of believing that p and desiring that q, whether in application to groups or individuals, I think that often what they’re picking out are definitions of belief and desire that are extracted from an overall theory of belief and desire in the “theoretical role” way. But it’s a huge step from that to assume that one is committed to full-blown functionalism about belief and desire, with its more-than-minimal realizors of the roles so-characterized. I think it’s misleading to label accounts that aren’t committed to more-than-minimal realizors as kinds of functionalism, and I think that’s one reason that I got myself puzzled at the way the terminology is (sometimes) used in this area.
